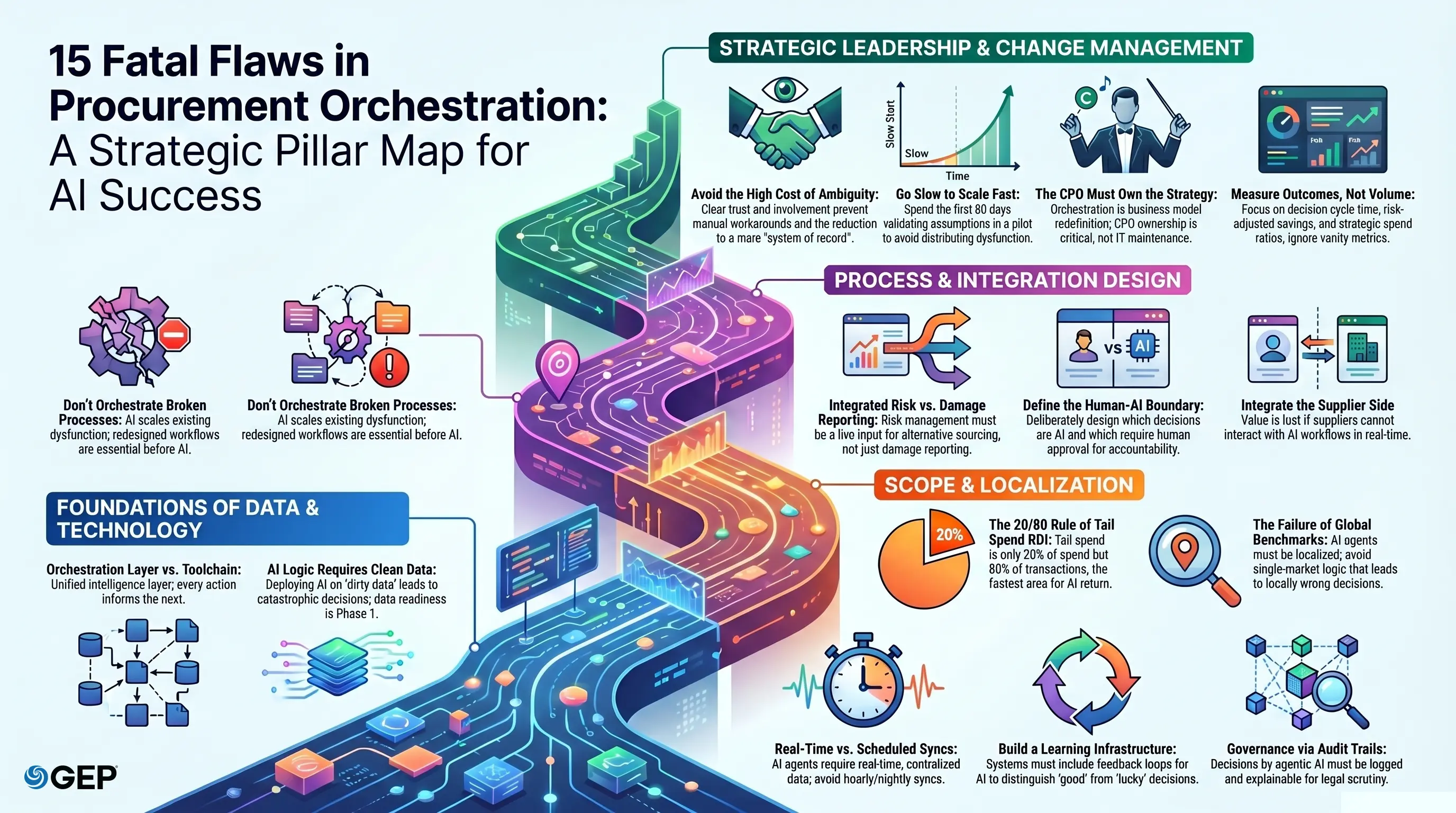

15 Devastating Procurement Orchestration Mistakes (& How to Avoid Them)

- Orchestration is built incrementally, not deployed in a single program.

- Most organizations that believe they've modernized procurement have simply digitized their old dysfunction and called it transformation.

- Orchestration, when done wrong, can lead to bad decisions made at scale (and fast).

May 19, 2026 | Procurement Strategy | 8-minute read

The pressure on procurement leadership has never been more unforgiving. Supply chains can snap without warning, margins keep tightening, and boards demand transformation on timelines that leave no room for half-measures.

The organizations that don’t just survive this but thrive are rearchitecting how procurement decisions are made, at speed and at scale. That rearchitecting is procurement orchestration, and it may be the most consequential capability your enterprise either builds or ignores in the next three years.

This article walks you through the 15 most common and most costly mistakes procurement leaders make when attempting orchestration at scale, and what to do instead.

What Is Procurement Orchestration?

Procurement orchestration is the intelligent coordination of every procurement activity through a unified operating model that connects data, decisions, and actions in real time. It's not a platform you procure and install but a capability you design from the ground up.

From need identification to supplier payment, every procurement touchpoint feeds a central intelligence layer that learns, adapts, and acts on your organization's behalf.

Agentic AI is what separates modern procurement orchestration from what came before it.

Unlike traditional automation, which follows rules, executes tasks, and stops, agentic AI operates with autonomous reasoning. It perceives context, evaluates options, takes action, and course-corrects based on outcomes.

AI agents can independently negotiate within defined parameters, identify and flag supplier risk before it surfaces as a crisis, dynamically reroute sourcing decisions when a market shifts, and continuously optimize spend across categories, all without a human initiating each step.

Orchestrated together within a unified data environment, these AI agents make the procurement function predictive as a whole, and far more competitive than one running on basic automation.


15 Common Mistakes Made by Leaders While Trying to Achieve Procurement Orchestration at Scale

Real procurement orchestration is an end-to-end operating model, where AI agents don't just assist but actively coordinate across sourcing, contracting, supplier management, and risk in real time, without waiting for someone to open a ticket.

The reason most leaders are getting this wrong is that they're shopping for features when they should be architecting capabilities, confusing automation with orchestration, and dramatically underestimating what agentic AI makes possible when it's deployed with intent.

Here are the 15 most common mistakes enterprise leaders make in procurement orchestration.

1. Letting IT Own What the CPO Must Lead

When procurement orchestration gets handed to technology teams as a deployment project, it dies quietly, not from technical failure but from strategic irrelevance. IT can build the infrastructure, but it can't make organizational trade-offs, redefine decision rights, or hold category leads accountable to a new operating model.

Without the CPO owning outcomes and the C-suite visibly sponsoring the shift, every competing priority will outrank it. You'll end up with an expensive system that no one is actually accountable for using, and a transformation initiative that quietly becomes a line item on a maintenance contract.

2. Deploying AI on Top of Broken Processes

Orchestration amplifies whatever it's built on. Orchestrating a broken process doesn't fix it. AI institutionalizes the dysfunction and scales it.

Before any AI agent touches a procurement workflow, ask whether that workflow should exist in its current form at all. The answer, most of the time, is no. If the foundation is fragmented, the output will be, too.

3. Mistaking a Toolchain for an Orchestration Layer

Building an RFP tool, layering on a contract scanner, connecting a spend analytics dashboard, and calling the result "orchestration" is one of the most expensive misconceptions in enterprise procurement today.

These tools don't share live data, don't adapt to each other's outputs, and don't optimize toward a shared outcome. They just create a more sophisticated version of the same fragmentation you were trying to escape.

Orchestration isn't a stack of connected tools. It's a unified intelligence layer where every action informs the next decision, and AI agents operate across the full procurement lifecycle without handoff gaps.

4. Launching AI Agents on Dirty Data & Then Wondering Why They Fail

Agentic AI reasons from the data it has. With the wrong data, AI agents will make operationally wrong decisions at scale, fast. The more autonomous your orchestration layer becomes, the more catastrophically bad data propagates.

Leaders who treat data readiness as something to fix "in Phase 2" are building an orchestration initiative on a foundation that will collapse under its own weight.

5. Underestimating Change Management in an AI-First Environment

You can design the most intelligent procurement orchestration layer available, and your team will find seventeen workarounds within 30 days if they don't understand it, don't trust it, or weren't involved in defining how it works.

This isn't resistance; it's ordinary behavior in the face of ambiguity. When people don't know which decisions the AI owns, they hedge by maintaining parallel manual processes. Those processes become the real operating model, and your orchestration investment becomes a system of record that nobody actually uses to make decisions.

6. Leaving the Human-AI Boundary Undefined & Paying for It in Accountability Gaps

Not every procurement decision should be AI-orchestrated, and not knowing which ones shouldn't is a critical design failure. When this boundary isn't deliberately drawn, AI agents either overreach into decisions they shouldn't touch or underperform because they're waiting for approvals they should have autonomy over. Accountability disappears in the ambiguity.
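To make the boundary concrete, here is a minimal Python sketch of an explicit decision-rights table. The category names, value ceilings, and the `route_decision` helper are hypothetical illustrations, not part of any specific platform. The point is that every category has a written-down owner, and anything unmapped falls back to a human rather than leaving the agent to guess.

```python
from dataclasses import dataclass
from enum import Enum


class Mode(Enum):
    AUTONOMOUS = "autonomous"   # agent acts on its own and logs the action
    RECOMMEND = "recommend"     # agent proposes; a named human approves
    HUMAN_ONLY = "human_only"   # agent only surfaces information


@dataclass(frozen=True)
class DecisionRight:
    max_autonomous_value: float  # agent may act alone strictly below this value
    mode_above_ceiling: Mode     # who owns the decision at or above the ceiling


# Hypothetical boundary table: one explicit row per decision category.
BOUNDARY = {
    "tail_spend_po": DecisionRight(5_000.0, Mode.RECOMMEND),
    "contract_renewal": DecisionRight(0.0, Mode.RECOMMEND),
    "supplier_termination": DecisionRight(0.0, Mode.HUMAN_ONLY),
}


def route_decision(category: str, value: float) -> Mode:
    """Return who owns a decision; unmapped categories default to humans."""
    right = BOUNDARY.get(category)
    if right is None:
        return Mode.HUMAN_ONLY  # an undefined boundary is never resolved by the agent
    if value < right.max_autonomous_value:
        return Mode.AUTONOMOUS
    return right.mode_above_ceiling
```

For example, `route_decision("tail_spend_po", 1_200)` returns `Mode.AUTONOMOUS`, `route_decision("tail_spend_po", 20_000)` returns `Mode.RECOMMEND`, and an unlisted category returns `Mode.HUMAN_ONLY`. Defaulting unknowns to human ownership is a deliberate choice: it keeps accountability clear until someone explicitly widens the agent's remit.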

7. Confusing Data Syncs with Real-Time Intelligence

Every enterprise software vendor will tell you their platforms are "integrated." What they mean, in most cases, is that data moves between systems on a scheduled sync: hourly, nightly, or whenever someone runs a report. That is not orchestration. Your AI agents end up making decisions on stale data.

Without real-time, centralized, and structured data, you're left with a more expensive, better-integrated version of the same fragmentation problem.
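One way to see the difference is to make data age an explicit input to every agent decision. The sketch below assumes a hypothetical `SupplierSnapshot` record stamped with its last sync time, a 15-minute freshness window, and a 0.7 risk threshold; all three are illustrative choices, not recommended values.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone


@dataclass
class SupplierSnapshot:
    """Hypothetical supplier record as seen by an AI agent."""
    supplier_id: str
    risk_score: float    # 0.0 (low) to 1.0 (high); illustrative scale
    synced_at: datetime  # when this record last arrived from its source system


def is_fresh(snapshot: SupplierSnapshot, now: datetime, max_age: timedelta) -> bool:
    """True if the record is no older than max_age."""
    return now - snapshot.synced_at <= max_age


def decide(snapshot: SupplierSnapshot, now: datetime,
           max_age: timedelta = timedelta(minutes=15),
           risk_threshold: float = 0.7) -> str:
    """Refuse to act autonomously on stale data; otherwise apply a simple risk rule."""
    if not is_fresh(snapshot, now, max_age):
        return "escalate: data too stale to act on"
    if snapshot.risk_score >= risk_threshold:
        return "reroute sourcing"
    return "proceed"


now = datetime(2026, 5, 19, 12, 0, tzinfo=timezone.utc)
nightly = SupplierSnapshot("S-001", 0.9, now - timedelta(hours=9))
live = SupplierSnapshot("S-001", 0.9, now - timedelta(minutes=2))
print(decide(nightly, now))  # escalate: data too stale to act on
print(decide(live, now))     # reroute sourcing
```

The same high-risk reading produces different behavior depending only on how old the data is: an agent fed by a nightly batch sync escalates instead of acting, while one fed by a near-real-time source can reroute sourcing on its own.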

8. Measuring How Much You've Automated Instead of What You've Actually Changed

Transaction automation volume is the most seductive and least meaningful metric in procurement orchestration. It tells you how many steps your system is executing, not whether the outcomes are better, faster, or smarter.

The metrics that reveal whether orchestration is actually working are decision cycle time, risk-adjusted savings realization, contract compliance rates, and the ratio of strategic spend under active management. Everything else is vanity data.

9. Building an Orchestration Model That Neglects Supplier-Side Integration

Procurement doesn't end when a purchase order is issued. It ends when a supplier delivers, performs, and gets paid, and every step of that cycle is a data source your orchestration layer needs.

When suppliers can't interact with your AI-driven workflows in real time, the intelligence of your orchestration model degrades, leaving actual value creation to chance.

10. Starving Your AI of Learning Infrastructure

Buying an AI-enabled procurement platform is not the same as building an intelligence layer. The difference is whether your system is getting smarter with every decision it makes or simply executing the same logic it was configured with.

Without feedback loops that capture decision outcomes, continuous model refinement, and mechanisms to distinguish a good decision from one that just happened to work, your orchestration capability has a fixed ceiling. It will never outperform its day-one configuration.

11. Letting Tail Spend Stay Invisible Because It Feels Too Small to Prioritize

Tail spend often accounts for 20% or more of total spend while involving up to 80% of suppliers and transactions. It is typically fragmented and unmanaged, and the non-compliant purchases it harbors add up to material losses.

It's also the part of your spend base where agentic AI delivers the fastest, most autonomous ROI, because the decisions are high-frequency, low-ambiguity, and well-suited to defined rules and learned patterns.

Leaders who deprioritize tail spend often overlook unmanaged categories where operational leakage, compliance gaps, and supplier risk accumulate fastest per dollar spent.

12. Treating Risk Management as a Separate Workstream

The gap between knowing about a supplier risk and acting on it within your procurement workflows is where supply chain disruptions arise.

When risk intelligence isn't a live input to your orchestration model, one that dynamically surfaces and escalates risks and triggers alternative sourcing pathways without human initiation, you're managing risk retrospectively. In volatile markets, that's just well-organized damage reporting.

13. Building Agentic AI Autonomy Without Any Audit Trail

Agentic AI takes consequential actions and makes compliance determinations. Every one of those actions needs to be logged, attributable, and explainable, in the specific format your legal team, external auditors, and regulators will require when something goes wrong.

Leaders who defer governance will end up with a system that cannot be audited, defended, or trusted with greater autonomy over time.
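As a rough illustration of "logged, attributable, and explainable," the Python sketch below records which agent acted, what it did, why, and on which inputs, and chains each entry to the previous one with a SHA-256 hash so that later edits become detectable. The `AuditLog` class and its field names are hypothetical; a production system would also need durable storage, access controls, and whatever record format your auditors and regulators actually specify.

```python
import hashlib
import json
from datetime import datetime, timezone

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(body: dict) -> str:
    """Hash a canonical JSON encoding of an entry (keys sorted for stability)."""
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode("utf-8")).hexdigest()


class AuditLog:
    """Append-only, hash-chained log of agent actions (illustrative only)."""

    def __init__(self) -> None:
        self.entries: list[dict] = []

    def record(self, agent_id: str, action: str, rationale: str, inputs: dict) -> dict:
        body = {
            "agent_id": agent_id,    # attributable: which agent acted
            "action": action,        # what it did
            "rationale": rationale,  # explainable: why it did it
            "inputs": inputs,        # data the decision relied on (must be JSON-serializable)
            "timestamp": datetime.now(timezone.utc).isoformat(),
            "prev_hash": self.entries[-1]["hash"] if self.entries else GENESIS,
        }
        entry = {**body, "hash": _digest(body)}
        self.entries.append(entry)
        return entry

    def verify(self) -> bool:
        """Recompute every hash; any edited or reordered entry breaks the chain."""
        prev_hash = GENESIS
        for entry in self.entries:
            body = {k: v for k, v in entry.items() if k != "hash"}
            if body["prev_hash"] != prev_hash or _digest(body) != entry["hash"]:
                return False
            prev_hash = entry["hash"]
        return True


log = AuditLog()
log.record("sourcing-agent-01", "issued PO", "lowest compliant bid of 3", {"bids": 3})
print(log.verify())  # True
log.entries[0]["rationale"] = "edited after the fact"
print(log.verify())  # False
```

Hash-chaining makes tampering detectable, not impossible, which is why the log itself still needs protected storage. What it does give auditors is a way to confirm that the record of what an agent did, and why, has not been rewritten after the fact.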

14. Scaling an Orchestration Model Before It Actually Works

Scaling agentic orchestration before it stabilizes distributes dysfunction, not efficiency, at enterprise scale. Every assumption you haven't validated in the pilot becomes a failure mode in the rollout, and every unaddressed data gap in one category becomes a systemic gap across all of them.

The organizations that scale orchestration fastest are almost always the ones that went slowest in the first 90 days, because they built something that worked before they scaled it.

15. Applying a Global Benchmark Standard to Every Market

Perhaps the most devastating mistake is designing your procurement orchestration model around a single global standard and assuming it travels.

Orchestration that fails to localize its intelligence layer to regional market structures, supplier maturity levels, currency and regulatory volatility, and cultural procurement dynamics isn't truly global.

What works in a mature, heavily digitized supply market bears almost no resemblance to what works in a region where supplier data is sparse, regulatory frameworks shift without warning, payment norms are relationship-driven, and informal networks carry more weight than formal contracts.

When your AI agents are trained on benchmarks, compliance logic, and decision rules built for one operating environment and then deployed into fundamentally different ones, they don't underperform modestly. They make confident, well-reasoned, and locally wrong decisions at scale.


Best Practices for Procurement Leaders

- Before selecting any technology, define the outcomes you need to drive, not the features you want to activate.

- Work backward from the decisions your procurement function makes, identify the data those decisions require, and design the integration model that makes that data available in real time to your team and AI agents.

- Data readiness deserves to be treated as a strategic initiative, not a technical cleanup project.

- Define the human-AI decision boundary precisely and deliberately.

- For each category of procurement decision, determine whether AI should be acting autonomously or surfacing information for human judgment.

- Change management should be treated with the same rigor as your technical implementation.

- Invest in role-specific training, build feedback mechanisms that allow teams to flag when AI decisions seem wrong, and ensure executive visibility is consistent and genuine.

- Build for auditability before you build for autonomy. Every action an AI agent takes should be logged, attributable, and reviewable.

The Orchestration Imperative: What's Coming and What You Must Do Now

In the years ahead, agentic AI will move progressively from augmenting procurement decisions to driving them. Leading procurement organizations have already begun building the intelligence layer that connects those decisions into a coherent, adaptive system.

Procurement orchestration isn't a destination you arrive at. It's a capability you compound. Avoiding the 15 mistakes outlined in this blog requires deliberate design, executive alignment, and the discipline to stabilize before you scale.

The compounding effect of a well-orchestrated procurement function on cost structure, cycle velocity, risk posture, and supplier relationships is an underestimated strategic lever available to enterprise leadership right now.

Ready to orchestrate procurement from scratch? Talk to an expert today.